A year ago, the best AI models sat down with the USA Math Olympiad — the proving ground for America’s most gifted high school mathematicians — and mostly embarrassed themselves. Circular arguments. Unsupported guesses. Solutions that wandered off into the algebraic wilderness and never came back. The results were, by the researchers’ own frank admission, disastrous.

Then came 2026.

GPT-5.4 just scored 95% on USAMO 2026. Not in a domain it was explicitly trained to ace, not on sanitized textbook problems — on fresh, unseen competition mathematics designed specifically to be hard for humans who have spent years preparing for it. Nearly perfect. One year after near-zero.

The researchers who ran this evaluation, partially supported by a Google grant, are careful not to oversell it, and we should be too. But even with appropriate restraint, the trajectory here is worth sitting with for a moment. The jump from “disastrous” to “essentially saturates the benchmark” is not a gradual curve. It’s a cliff edge.

What’s particularly telling isn’t the top-line score. It’s the nature of the errors that disappeared. In 2025, models were guessing, reasoning in circles, producing irrelevant walls of text. By 2026, those basic failure modes are largely gone. The models that score poorly now fail for different, more sophisticated reasons — running out of tokens on a single hard problem, or reconsidering their approach mid-proof and slipping back into exploratory thinking instead of finishing the argument. These are the failures of a mathematician who genuinely grappled with something difficult, not of a system that never understood the question.

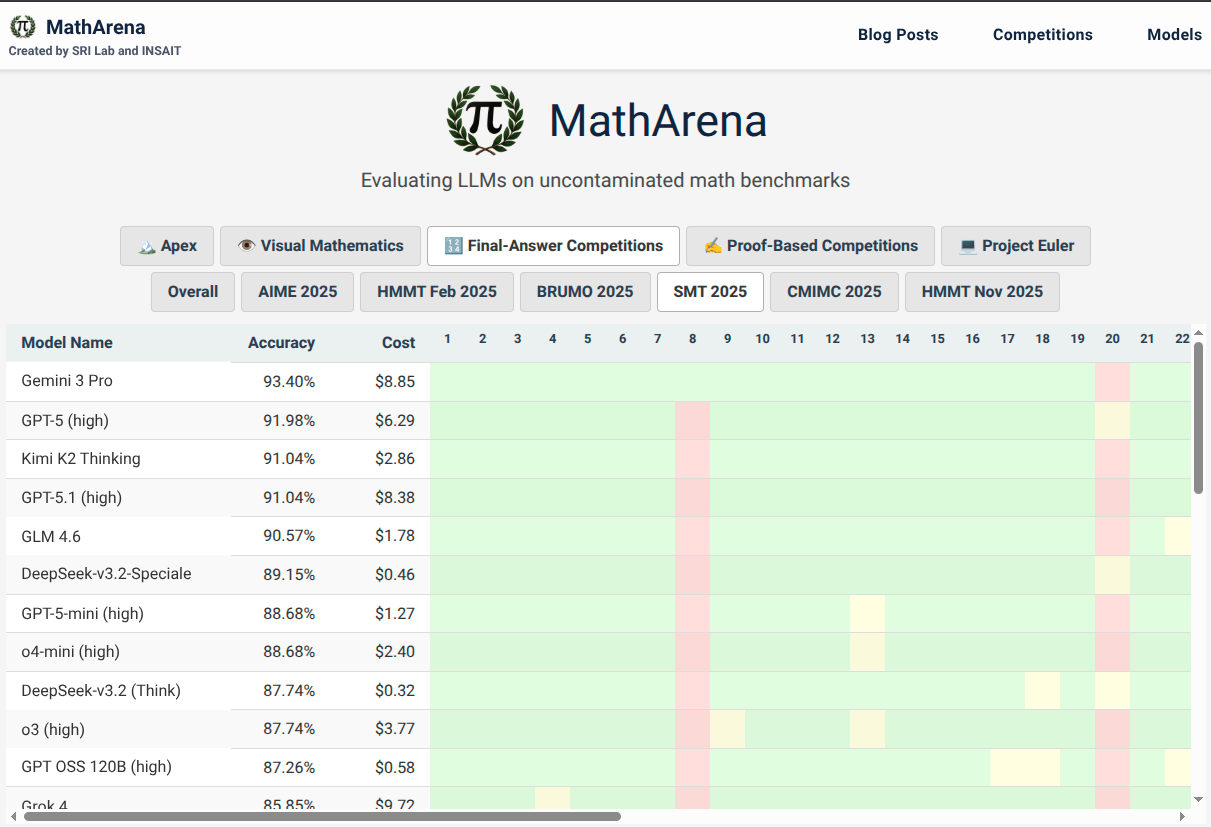

The gap that remains is the story within the story. GPT-5.4 aside, the field is still deeply stratified. Gemini-3.1-Pro clears 74%, Opus-4.6 sits at 47%, and the best open-source model — Step-3.5-Flash — reaches 44.6%. These aren’t terrible scores on one of the hardest mathematics competitions in the US. But next to a near-perfect run, they look like a different era of technology entirely.

The hardest problem for LLMs this year was Problem 3, a geometry proof requiring a chain of non-obvious synthetic insights. The average score across all models was 25%. GPT-5.4 was the only model to score full marks. Tellingly, it did so not through elegant geometric reasoning — the expected route — but through sheer coordinate-bashing, grinding out pages of algebra until the answer emerged. Less beautiful than the intended solution. But it worked, and nobody else got there.

Problem 2, a combinatorics puzzle about merging powers of 2, laid bare an interesting divide in how different models think. The elegant human approach was constructive: build a strategy, track an invariant, prove it. Nearly every model that tried this route failed. The successful solutions came from the stronger models and went the other direction entirely — reframing the problem as a binary tree, then invoking the Kraft-McMillan inequality from information theory. A computer-science mind solving a math competition problem. Whether that’s clever or a tell, you can decide.
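For readers who have not met it, here is the standard textbook statement of the Kraft-McMillan inequality. The article's source does not reproduce the models' proofs, so this is only the general result in its binary form, not the specific argument any model used. The symbols $\ell_i$ and $d_i$ are notation introduced here for illustration.

$$
\sum_{i=1}^{n} 2^{-\ell_i} \le 1
$$

Here $\ell_1, \dots, \ell_n$ are the codeword lengths of any uniquely decodable binary code. Conversely, any list of lengths satisfying the inequality can be realized by a prefix-free code. The binary-tree reading is the one that matters for this kind of problem: if the leaves of a binary tree sit at depths $d_1, \dots, d_n$, then

$$
\sum_{i=1}^{n} 2^{-d_i} \le 1,
$$

with equality exactly when every internal node has two children. A tree of pairwise merges is a natural place for such a sum of powers of 2 to appear.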

One of the more candid admissions in the paper concerns Opus-4.6 specifically. In 4 out of 24 responses, it hit its 128,000 token limit without producing a finished proof — three of those failures on a single problem. The researchers chose not to rerun those samples. On one hand, that’s honest methodology; the hyperparameters were set as recommended. On the other, it means Opus-4.6’s 47% score has a small asterisk: those incomplete responses were scored as zero, not as partial credit for what they did produce.

The grading pipeline itself is worth examining, because running an automated proof-grading system at scale introduces its own uncomfortable problem: the judges know whose work they’re reading. Or at least, they might. Self-bias — models scoring their own outputs more generously — is a known pathology. Formatting bias adds another wrinkle, where a neatly structured proof gets bumped up just for looking tidy.

The solution was a three-model jury: GPT-5.4, Gemini-3.1-Pro, and Opus-4.6. If they agreed within two points, the minimum of the three scores was taken — because in the researchers’ experience, LLM judges lean generous. If they disagreed by more than two points, each judge saw all three initial verdicts and was asked to reconcile them. Then again, the minimum.
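The aggregation rule is simple enough to sketch in a few lines. This is a minimal illustration under stated assumptions, not the researchers' actual pipeline: "agreed within two points" is read as the spread between the highest and lowest score being at most 2, and the names `aggregate_jury`, `AGREEMENT_WINDOW`, and `median_reconcile` are hypothetical. In the real setup the reconciliation step re-prompts each judge with all three verdicts; here a placeholder function stands in for it.

```python
from typing import Callable, List, Sequence

# Assumption: "within two points" means max score minus min score is at most 2.
AGREEMENT_WINDOW = 2


def aggregate_jury(
    initial_scores: Sequence[int],
    reconcile: Callable[[Sequence[int]], Sequence[int]],
) -> int:
    """Combine the judges' scores for one proof into a single final score."""
    spread = max(initial_scores) - min(initial_scores)
    if spread <= AGREEMENT_WINDOW:
        # Judges roughly agree: take the most conservative verdict.
        return min(initial_scores)
    # Judges disagree: each one sees all initial verdicts and returns a
    # revised score, then the minimum of the revised scores is taken.
    revised = reconcile(initial_scores)
    return min(revised)


def median_reconcile(scores: Sequence[int]) -> List[int]:
    """Hypothetical stand-in for re-prompting the judges.

    Every judge simply moves to the median of the initial scores.
    """
    middle = sorted(scores)[len(scores) // 2]
    return [middle for _ in scores]


close_call = aggregate_jury([7, 6, 5], median_reconcile)  # spread 2, no second round
split_call = aggregate_jury([7, 6, 2], median_reconcile)  # spread 5, second round runs
print(close_call, split_call)
```

Taking the minimum in both branches builds the researchers' stated concern directly into the rule: if LLM judges lean generous, the harshest of three verdicts is the safest estimate.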

The self-bias problem resolved itself in an unexpected way: because GPT-5.4 scores nearly perfectly, there’s not much room to inflate its own results. Gemini-3.1-Pro and Opus-4.6 both substantially overestimated their own performance — which is almost poignant in its familiarity.

After all the automated grading, a human team reviewed every solution by hand. What they found was encouraging: the jury’s scores tracked the final human-verified scores closely, with most disagreements being minor. A few problems had grading schemes that were too rigid or ambiguously phrased, which tripped up the judges until the human reviewers corrected the scores. The machinery was robust. It just needed supervision.

What’s genuinely worth paying attention to is the qualitative difference in how these models write mathematics. GPT-5.4’s proofs are apparently the easiest to follow — clear, precise, well-organized, making appropriate use of lemmas and subarguments. Opus-4.6 tries hardest to look polished: careful headers, bolded subsections. Gemini-3.1-Pro is more conversational, walking readers through reasoning step by step. Among open models, Qwen3.5-397B is so concise it skips steps; GLM-5 is so verbose it sometimes loses the thread; Step-3.5-Flash is structurally similar to GPT-5.4 but less refined — and occasionally breaks sentences in the middle with unexpected newlines, which is the kind of small, strange tell that reminds you these systems are doing something quite different from what they appear to be doing.

USAMO, at this point, probably has limited runway left as a meaningful benchmark for the top models. That’s not a criticism of the competition. It remains a genuinely hard test: one model reaches 95% because it is exceptional, while the others still lag significantly. But the pattern holds: IMO, Putnam, Miklós Schweitzer. One by one, the competitions that seemed like reliable ceilings have turned into measuring sticks for the gap between models rather than barriers for all of them.

A year ago, this score would have been headline news. Today, it lands more like confirmation of something people were already starting to expect. That shift in expectation might be the most significant development here — not the score itself, but the fact that it no longer surprises us.
